Over the past two years, generative AI has emerged as one of the key drivers of technological transformation in businesses.

The emergence of tools like ChatGPT has profoundly changed the way organizations view artificial intelligence. Whereas AI projects were once limited to analytical or predictive use cases, they are now being integrated directly into business processes.

Virtual assistants for employees, content assistants, and automated customer service: generative AI is gradually transforming the workplace experience.

But this trend also highlights a reality that is often underestimated: the real challenge is no longer just the model itself, but the architecture that allows it to be used effectively and securely in an enterprise setting.

From model training to system architecture

For more than a decade, AI projects have been dominated by a model-centric approach.

Data teams trained their own algorithms on company data to solve specific problems.

With large language models, this logic changes radically.

Companies no longer necessarily develop their own models. Instead, they rely on existing platforms, such as the Azure OpenAI Service, to access advanced AI capabilities.

The crux of the problem then becomes an architectural one: how can these models be integrated into existing information systems?

LLM architecture: a new frontier in engineering

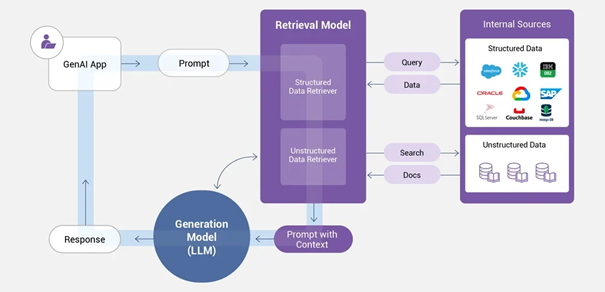

Generative AI systems rely on a combination of technological components:

- language models such as GPT-4, Claude, Mistral…

- vector databases for semantic search (Azure AI Search, Qdrant, pgvector…)

- RAG architectures that enable the integration of AI with internal data

- orchestration frameworks such as LangChain, Semantic Kernel…

- security, governance, and multi-tenant management layers

These building blocks must be integrated into applications that are reliable, secure, and capable of operating at scale.
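To make the interplay between these building blocks more concrete, here is a minimal sketch of a RAG flow in plain Python. It is an illustration under stated assumptions, not a reference implementation: `embed` is a toy word-count vector standing in for a real embedding model, `InMemoryVectorStore` stands in for a vector database, and the `llm` argument is any callable that takes a prompt and returns text. All of these names are hypothetical.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str


def embed(text: str) -> Counter:
    # Toy embedding (assumption): word counts instead of a real embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database: keeps (document, vector) pairs in a list."""

    def __init__(self) -> None:
        self._items: list[tuple[Document, Counter]] = []

    def add(self, doc: Document) -> None:
        self._items.append((doc, embed(doc.text)))

    def search(self, query: str, k: int = 2) -> list[Document]:
        # Rank every stored document by similarity to the query and keep the top k.
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]


def build_prompt(question: str, context: list[Document]) -> str:
    # Augmentation step: inject the retrieved passages into the prompt.
    passages = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return f"Answer using only the context below.\n\nContext:\n{passages}\n\nQuestion: {question}"


def answer(question: str, store: InMemoryVectorStore, llm: Callable[[str], str], k: int = 2) -> str:
    # Retrieve, augment, generate.
    return llm(build_prompt(question, store.search(question, k)))


if __name__ == "__main__":
    store = InMemoryVectorStore()
    store.add(Document("hr-1", "Employees receive 25 days of paid vacation per year."))
    store.add(Document("it-1", "Password resets are handled through the IT self-service portal."))
    # Placeholder model (assumption): echoes the prompt instead of calling a real LLM.
    print(answer("How many vacation days do employees receive?", store, lambda prompt: prompt, k=1))
```

In a production system, each stand-in would be replaced by a managed component, and the orchestration in `answer` would typically be handled by a framework. The sketch only shows how retrieval, prompt construction, and generation fit together.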

This complexity explains the emergence of a new role within technical teams: the engineer specializing in LLM architectures.

A hybrid profile that is still rare

This new engineering field lies at the intersection of several disciplines:

- software architecture

- cloud computing

- data engineering

- artificial intelligence

Unlike traditional machine learning roles, this position does not involve training models. It involves designing and orchestrating complete generative AI systems under real-world business constraints: security, multi-tenancy, observability, and integration with existing systems.
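Two of these constraints, multi-tenancy and observability, can be illustrated with a short sketch. The names used here (`Chunk`, `TenantScopedIndex`, `observed`, `handle_request`, the `genai.gateway` logger) are hypothetical, and keyword matching stands in for real semantic search. The point is only that tenant filtering happens inside the retrieval layer, and that every request is timed and logged.

```python
import functools
import logging
import time
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("genai.gateway")  # hypothetical logger name


@dataclass(frozen=True)
class Chunk:
    tenant_id: str
    text: str


class TenantScopedIndex:
    """Hypothetical index that never returns chunks belonging to another tenant."""

    def __init__(self, chunks: list[Chunk]) -> None:
        self._chunks = list(chunks)

    def search(self, tenant_id: str, query: str) -> list[Chunk]:
        # The tenant filter is applied here, not left to the caller.
        terms = set(query.lower().split())
        return [
            c for c in self._chunks
            if c.tenant_id == tenant_id and terms & set(c.text.lower().split())
        ]


def observed(fn):
    # Observability: log the duration of each call, even when it raises.
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            logger.info("%s finished in %.1f ms", fn.__name__, elapsed_ms)
    return wrapper


@observed
def handle_request(tenant_id: str, question: str, index: TenantScopedIndex,
                   llm: Callable[[str], str]) -> str:
    # Only the caller's own tenant data can reach the prompt.
    context = index.search(tenant_id, question)
    prompt = "\n".join(c.text for c in context) + f"\n\nQuestion: {question}"
    return llm(prompt)
```

Placing the tenant filter inside the index, rather than in each calling application, is one possible design choice: it reduces the risk that a new feature forgets to apply it.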

This hybrid nature explains why these profiles are still relatively rare on the market.

The strategic importance of GenAI architectures

As companies roll out AI-powered virtual assistants, large language model (LLM) architectures are becoming an essential component of information systems.

Organizations that succeed in scaling up these architectures will gain a significant competitive advantage: an enhanced ability to leverage their information assets and automate high-value-added tasks.

In this context, the engineering of generative AI systems is gradually emerging as a new strategic discipline within software engineering, and the engineers who practice it as key members of the technical teams of the future.